ARC-AGI-3: What Interactive Reasoning Benchmarks Change for Agent Design

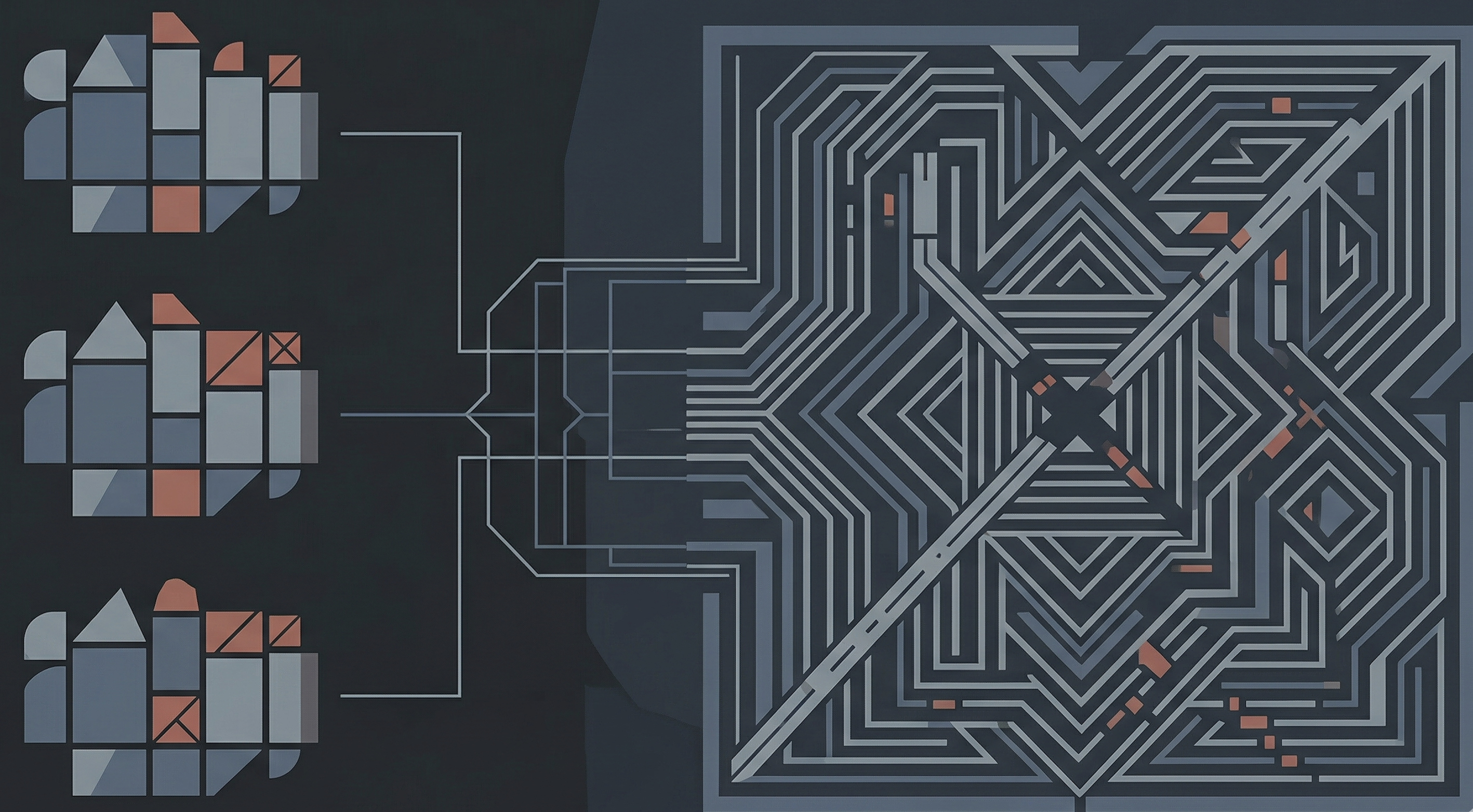

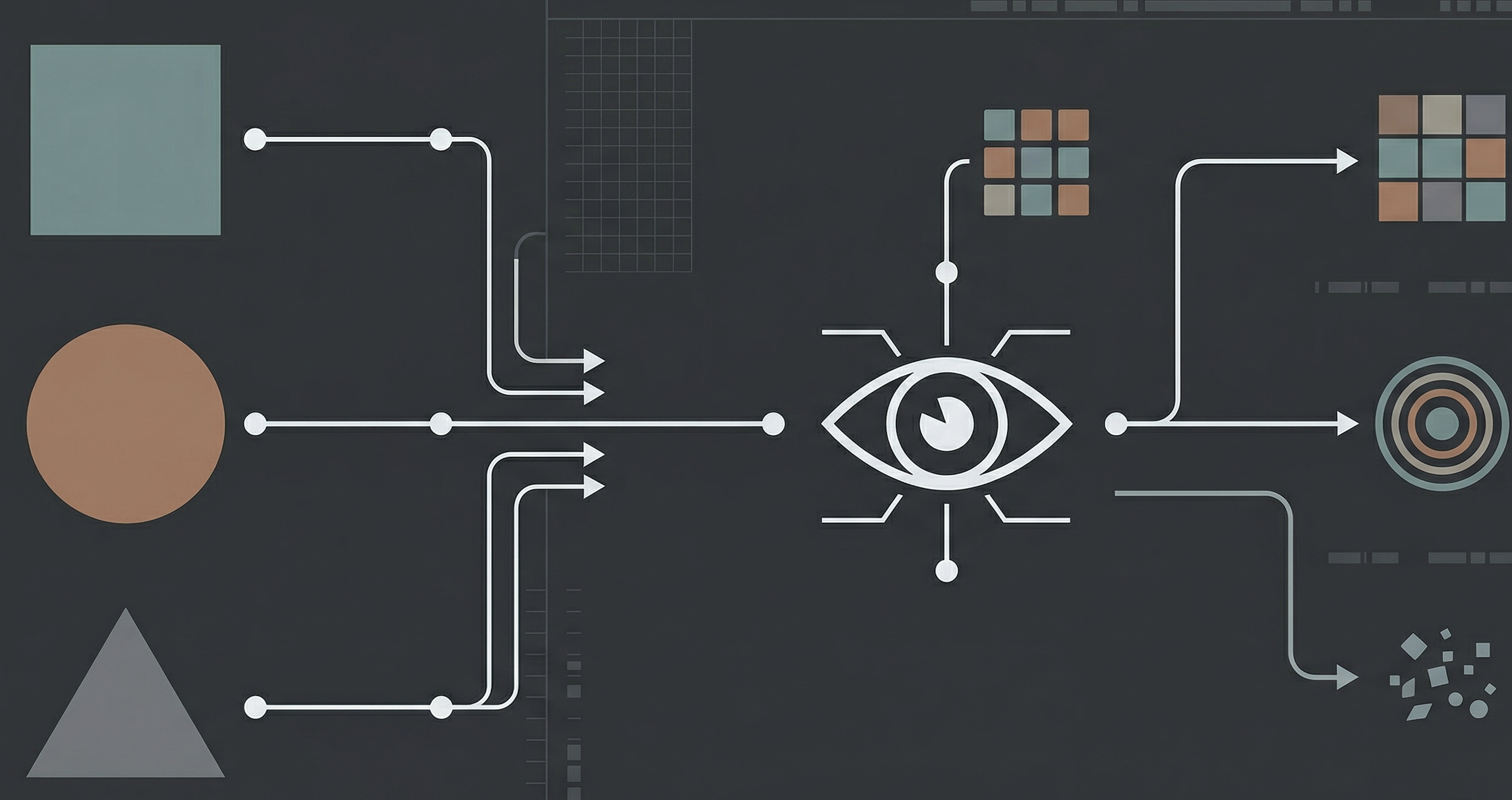

ARC-AGI-3 matters because it turns evaluation into an environment-navigation problem rather than a static puzzle. In my early experiments, the interesting question was not whether an agent could eventually solve a task, but how it observed state, formed hypotheses, recovered from bad actions, and kept its action count down. This article breaks down what ARC-AGI-3 tests, why action efficiency changes the engineering problem, and where stateful agent frameworks help or hurt.
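To make the shift concrete, here is a toy sketch of why "eventually solves it" and "solves it efficiently" come apart once every action costs something. Everything in it is hypothetical and made up for illustration: `ToyEnv`, `linear_agent`, and `hypothesis_agent` are not part of ARC-AGI-3's API, and the "find a hidden cell" task is a stand-in, not an actual ARC-AGI-3 game or its scoring rule. Both agents always succeed, but the one that keeps a set of still-consistent hypotheses and uses each observation to prune it spends far fewer actions.

```python
from dataclasses import dataclass


@dataclass
class ToyEnv:
    """Hypothetical stand-in for an interactive task (NOT the ARC-AGI-3 API).

    A hidden target cell lies in 0..size-1. Each probe costs one action and
    returns a coarse observation: "hit", "higher", or "lower".
    """
    target: int
    size: int
    actions: int = 0

    def step(self, guess: int) -> str:
        self.actions += 1  # every interaction is counted, like an action budget
        if guess == self.target:
            return "hit"
        return "higher" if guess < self.target else "lower"


def linear_agent(env: ToyEnv) -> int:
    # Ignores the observations and probes cells in order until it hits.
    for guess in range(env.size):
        if env.step(guess) == "hit":
            return env.actions
    raise RuntimeError("target not found")


def hypothesis_agent(env: ToyEnv) -> int:
    # Tracks the hypotheses still consistent with past observations as the
    # interval [lo, hi] and probes its midpoint, so each observation
    # discards roughly half of the remaining candidates.
    lo, hi = 0, env.size - 1
    while lo <= hi:
        guess = (lo + hi) // 2
        obs = env.step(guess)
        if obs == "hit":
            return env.actions
        if obs == "higher":
            lo = guess + 1
        else:
            hi = guess - 1
    raise RuntimeError("target not found")


if __name__ == "__main__":
    print(linear_agent(ToyEnv(target=900, size=1024)))      # 901 actions
    print(hypothesis_agent(ToyEnv(target=900, size=1024)))  # 10 actions
```

Both agents reach the same final state; only the action count differs. That gap, rather than the pass/fail outcome, is the kind of signal an action-efficiency-aware evaluation surfaces, and it is why the agent's observation handling and hypothesis tracking become first-class engineering concerns.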